Render Refraction Issues

03 February 2015 00:17

You guys are probably aware of this, but I was wondering whether there is a workaround or a fix for this…

In a scene, objects that are close to an object whose material has refraction enabled show refraction artifacts, as in the attached image. Also, the effect gets stronger the farther the camera is from the refractive object.

Thanks in advance!

03 February 2015 10:34

Our refraction is a fake: in real life this effect needs a lot of computation, and we just can't reproduce it exactly in a realtime renderer.

Refraction was actually designed to be used with normal maps (water, mosaic glass, etc.), so it won't work very well on flat surfaces viewed at an acute angle.
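To see why normal maps matter, here is a minimal 1D sketch of a common screen-space approximation: sample a pre-rendered image of the scene behind the glass at a position shifted by the surface normal. This is an assumed, generic technique for illustration, not the engine's actual shader; `refract_scanline` and `strength` are hypothetical names.

```python
def refract_scanline(background, normals_x, strength):
    """Distort one row of a pre-rendered background image.

    background: colours of the opaque scene behind the glass (one row).
    normals_x:  x-component of the view-space surface normal at each pixel.
    strength:   refraction factor (hypothetical name for the material's value).
    """
    width = len(background)
    out = []
    for i, nx in enumerate(normals_x):
        # Shift the lookup position sideways, proportional to the normal.
        j = i + round(nx * strength)
        # Clamp so we never read outside the captured image.
        j = min(max(j, 0), width - 1)
        out.append(background[j])
    return out


row = list("ABCDEFGH")

# Flat glass: the same normal everywhere, so the whole image just slides over.
flat = refract_scanline(row, [0.5] * 8, 4)

# Normal-mapped glass (water, mosaic): the normal varies per pixel,
# so the offset varies too and the background looks rippled.
bumpy = refract_scanline(row, [0.5, -0.5, 0.0, 0.5, -0.5, 0.0, 0.5, -0.5], 4)

print("".join(flat))   # CDEFGHHH
print("".join(bumpy))  # CACFCFHF
```

On the flat surface every pixel gets the same offset, so the result is just a shifted copy with a smeared edge (the repeated `H`, where the lookup runs off the captured image). In this approximation, viewing a flat surface at a more acute angle makes the normal's screen-space component larger, so the shift and the edge smearing grow.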

What effect are you trying to achieve? Maybe there is a workaround.

03 February 2015 12:31

For now I've just been turning off refraction in complex scenes. One scene has a sculpture model with multiple sheets of glass, some of them curved, so refraction is key to getting a photorealistic look. Similar to this: link

03 February 2015 16:56

Well, this is an interesting question. I tried a few things, so look through these material setups; maybe you'll find something that fits :)

The main problem is that we can't use raytracing algorithms in realtime rendering, so some things have to be sacrificed. Well, yes, there actually are raytraced scenes in WebGL, but right now they are very heavy on hardware.

Our refraction works like this: we render all the opaque objects behind the refractive object and then distort that render by the factor set in the Refraction node. The problem is that if we need to render several transparent (blend) objects, we have to render the whole scene again every time refraction is used. So in this example we needed to render the scene… 18 times! I don't think that could be fast enough x)

So other transparent objects get "eaten" by the first refractive object, because only opaque objects are rendered into the image it distorts.
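The two consequences of this scheme, what ends up in the refraction background and how the render count grows, can be shown with a toy model. It is built only from the description in this reply; `SceneObject`, `refraction_background` and `renders_for_correct_layering` are hypothetical names, not engine APIs.

```python
from dataclasses import dataclass


@dataclass
class SceneObject:
    name: str
    depth: float        # distance from the camera
    transparent: bool
    refractive: bool


def refraction_background(refractor, scene):
    """Names of the objects that end up in the refractor's background image.

    Only opaque objects behind the refractor are rendered into it, so any
    transparent object behind it (even another refractive one) is missing.
    """
    return [o.name for o in scene
            if not o.transparent and o.depth > refractor.depth]


def renders_for_correct_layering(scene):
    """One extra full-scene render per refractive object (toy cost model)."""
    return sum(1 for o in scene if o.refractive)


scene = [
    SceneObject("wall", 10.0, transparent=False, refractive=False),
    SceneObject("glass_back", 5.0, transparent=True, refractive=True),
    SceneObject("glass_front", 2.0, transparent=True, refractive=True),
]

front = scene[2]
print(refraction_background(front, scene))  # ['wall']  (glass_back is "eaten")
print(renders_for_correct_layering(scene))  # 2
```

The back sheet of glass never appears through the front one, and layering them correctly would cost one more full-scene render per refractive object, which is why many glass sheets quickly become too expensive.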

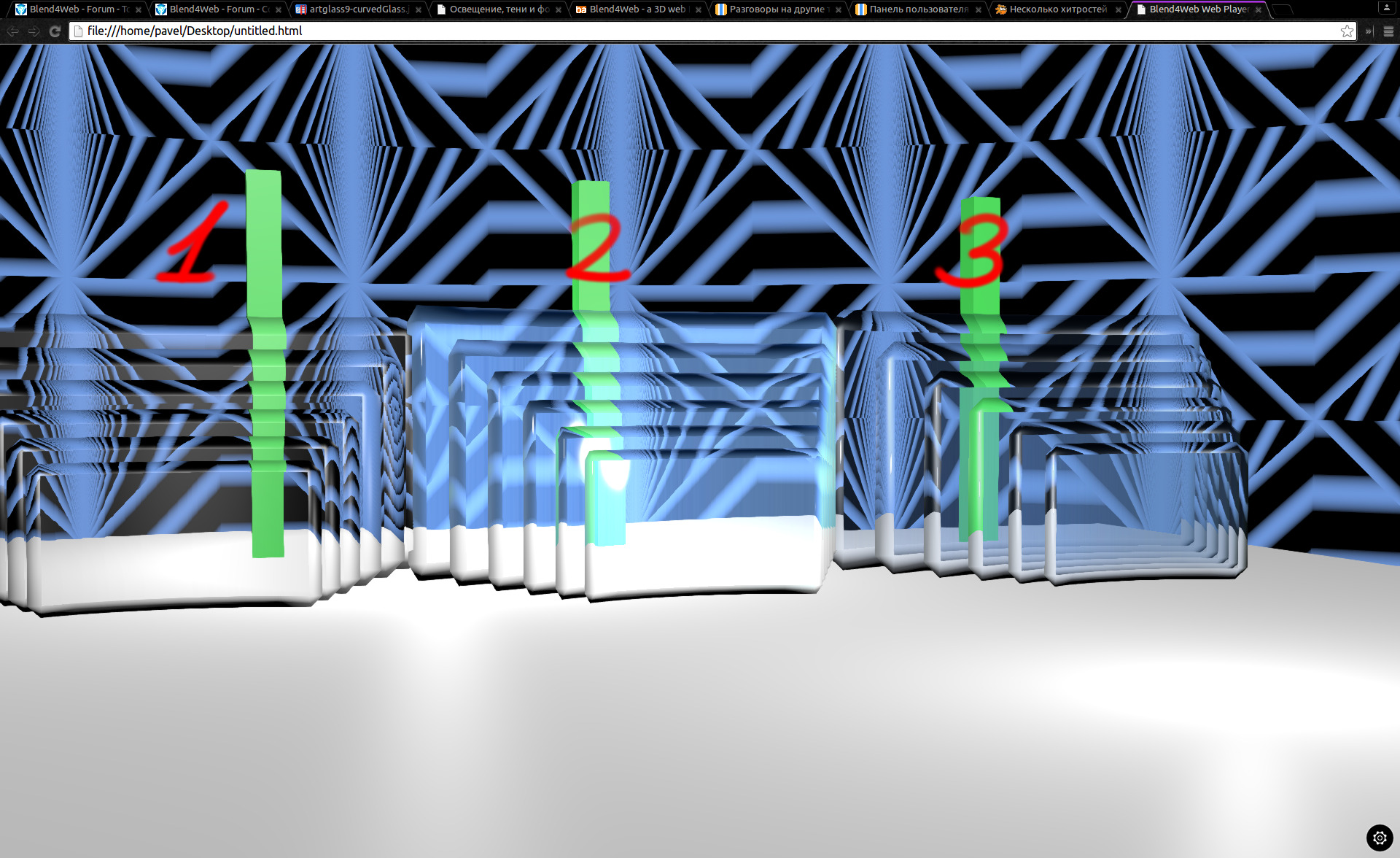

But I found a kind of hack: the parts where refraction can actually be seen are the bevels, so I made only them refractive. I mean the third example (the first one has only refraction, the second one refraction + some matcap, the third one refraction only on the bevels + matcap).

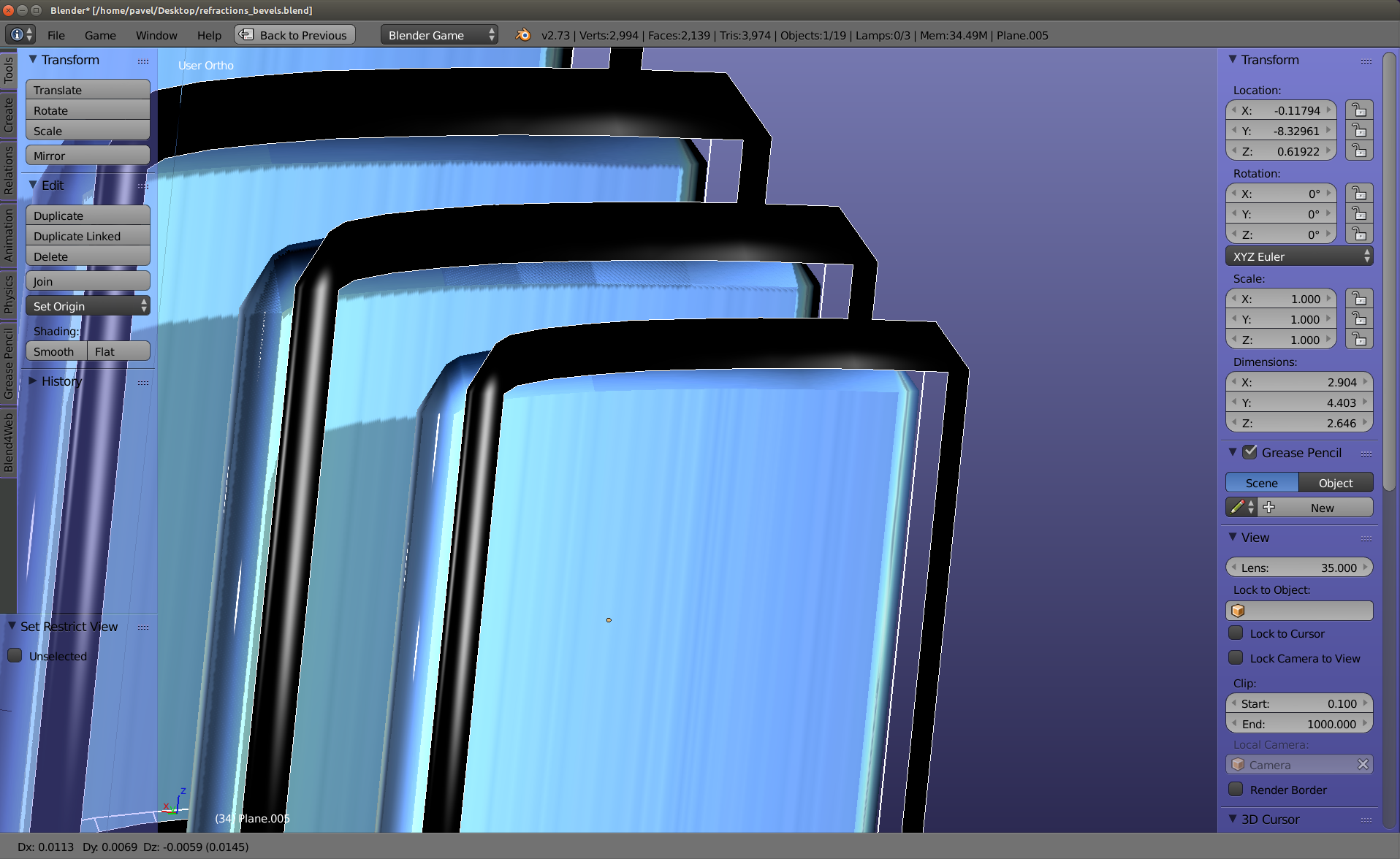

Here's the trick: I gave the glass an ordinary transparent material and copied the bevels. I separated those bevels into another object and made them refractive; in the screenshot I moved the bevel object aside to demonstrate this. And somehow it actually works!
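In this thread the bevels were copied and separated by hand. If you wanted to automate picking them, one possible criterion (an assumption, not something described in the post) is to compare each face's normal with the normal of the large flat faces: faces pointing in a clearly different direction are the bevels and edges that would get the refractive material. `split_bevels` and its threshold are hypothetical, and this is plain Python, not the Blender API.

```python
import math


def split_bevels(faces, max_angle_deg=10.0):
    """Split faces into (flat, bevel) name lists.

    faces: list of (name, unit_normal, area) tuples.
    A face counts as "flat" if its normal is within max_angle_deg of the
    largest face's normal (on either side of the sheet); everything else
    is treated as a bevel/edge face.
    """
    main_normal = max(faces, key=lambda f: f[2])[1]
    limit = math.cos(math.radians(max_angle_deg))
    flat, bevel = [], []
    for name, normal, _area in faces:
        # abs() so the back face (opposite normal) also counts as flat.
        alignment = abs(sum(a * b for a, b in zip(normal, main_normal)))
        if alignment >= limit:
            flat.append(name)
        else:
            bevel.append(name)
    return flat, bevel


s = math.sqrt(0.5)
sheet = [
    ("front", (0.0, 0.0, 1.0), 1.0),
    ("back", (0.0, 0.0, -1.0), 1.0),
    ("bevel_top", (0.0, s, s), 0.05),
    ("edge_top", (0.0, 1.0, 0.0), 0.02),
]
flat, bevel = split_bevels(sheet)
print(flat)   # ['front', 'back']
print(bevel)  # ['bevel_top', 'edge_top']
```

The flat faces keep the cheap transparent material and only the small bevel faces go into the refractive object, matching the split made by hand above.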

Hope it helps!

.blend

.html
